AI Strategy / Virtual CAIO Services

Separating the Reality of AI from the Hype

AI Strategy & Leadership for the Mid-market

Artificial Intelligence has captured global attention faster than nearly any technology before it, and with it has come both enormous hype and extraordinary potential. For CIO IQ® and Contract CIO+® clients interested in targeted guidance to launch or strengthen an AI program, Innovation Vista offers tailored engagements that separate the reality of AI from the hype, and deliver real business results.

Navigating today’s AI landscape requires not just technical fluency, but also a clear vision for the “art of the possible.” Our experts cast that vision and connect it to your business model, ensuring AI investments align with strategy and drive meaningful outcomes.

Our consultants possess expertise in:

- Large Language Models (LLMs)

- Machine Learning (ML)

- Natural Language Processing (NLP)

- Generative AI

- Descriptive AI

- Vision AI / Sensory AI

- Python & R architecture

- Dataset prep & management

- AI governance & privacy

It's not about the technology, but the results

Driving Business Impact with AI

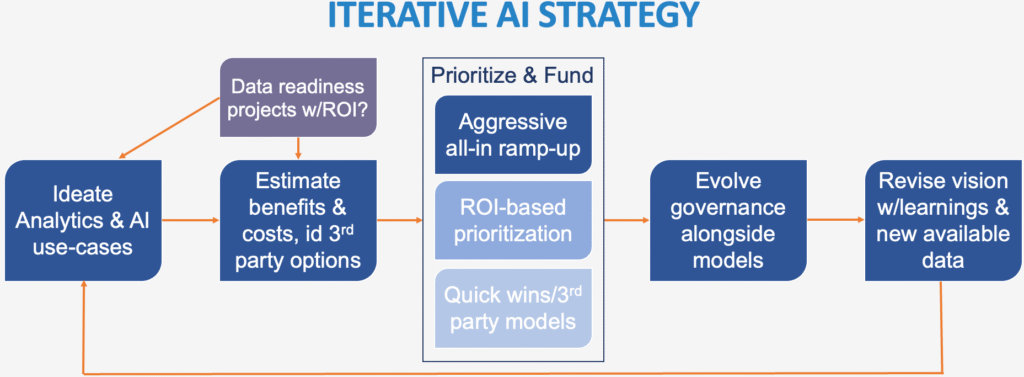

For our consultants, the power of AI is not about the technology itself; it’s about the business outcomes we enable with it. Recent advances in licensed AI platforms and the affordability of hyperscale cloud services have created tremendous new opportunities, but feasibility doesn’t equal priority. Our AI engagements are designed to extend and expedite the kinds of impact Contract CIO+® is surfacing for our clients. We separate “must do” and “should do” AI use cases from the “could do,” include all prerequisite data engineering work in the planning and analysis, and apply AI only where it delivers clear, measurable value.

In a landscape evolving as quickly as AI, with as much hype as genuine potential, the right guidance is essential. We help our clients avoid the traps of wasted investment while capturing the benefits of AI solutions that are aligned with their strategy. Our experience with similar initiatives and our approach to prioritizing AI projects make all the difference. The result is not experimentation for the sake of claiming to use AI, but sustainable capabilities that generate efficiency, revenue growth, and competitive advantage.

We are happy to tailor an engagement that gives your organization the best leverage from our expertise. Some common engagement types include:

- Virtual CAIO (vCAIO) Services

- Executive AI brief & strategic overview

- AI strategy development: use-case ideation workshops, feasibility analysis, roadmapping

- AI program launch/oversight

- AI advisory

- AI governance

Successful AI requires constant, organized vigilance

Establishing AI Governance Practices

Without strong governance, even well-designed AI programs can drift into risk once the initial excitement and scrutiny fade. Innovation Vista helps clients establish practical, sustainable governance frameworks that protect both their brand and their customers, while ensuring AI programs remain compliant and trustworthy over time.

Our approach begins by aligning with ethical principles, regulatory obligations, and business priorities. We then map the organization’s AI inventory, assess risks for each model, and establish a tiered governance framework covering data provenance, model development, validation, deployment, and monitoring. This structure includes ready-to-use policies, role matrices, and control templates harmonized with industry best practices.
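As a rough illustration of how an AI inventory might be mapped to governance tiers, the sketch below scores each model on three risk factors and looks up the controls for its tier. The factor names, the scoring rule, and the control lists are hypothetical placeholders chosen for this example, not a description of any specific client framework.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class ModelRecord:
    """One entry in the AI inventory (fields are illustrative assumptions)."""
    name: str
    uses_personal_data: bool
    customer_facing: bool
    automated_decision: bool


def assign_tier(model: ModelRecord) -> Tier:
    """Count the risk factors present and map the count to a tier."""
    score = sum([model.uses_personal_data,
                 model.customer_facing,
                 model.automated_decision])
    if score >= 2:
        return Tier.HIGH
    if score == 1:
        return Tier.MEDIUM
    return Tier.LOW


# Hypothetical controls per tier, covering the lifecycle stages named above.
REQUIRED_CONTROLS = {
    Tier.LOW: ["data provenance record"],
    Tier.MEDIUM: ["data provenance record", "validation report",
                  "drift monitoring"],
    Tier.HIGH: ["data provenance record", "validation report",
                "drift monitoring", "pre-deployment review",
                "periodic audit"],
}

inventory = [
    ModelRecord("invoice-classifier", False, False, False),
    ModelRecord("support-chatbot", False, True, False),
    ModelRecord("credit-scoring", True, True, True),
]

for record in inventory:
    tier = assign_tier(record)
    print(record.name, tier.name, REQUIRED_CONTROLS[tier])
```

In practice the risk factors and their weighting would come out of the alignment step with ethical principles, regulatory obligations, and business priorities rather than a simple count.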

To make governance operational, we integrate data lineage and testing checkpoints into data pipelines, deploy dashboards that highlight bias, drift, privacy, and security risks in real time, and train cross-functional AI Councils to oversee ongoing performance. We also embed “responsible AI champions” within delivery teams and design clear escalation and audit processes to satisfy both internal and external stakeholders.
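One way a monitoring dashboard could quantify drift is the Population Stability Index (PSI), which compares the distribution of a feature or score at training time against recent production data. The sketch below is a minimal, dependency-free version; the function name, the bin count, and the 0.2 alert threshold (a common rule of thumb) are assumptions made for illustration.

```python
import math
from typing import List, Sequence

# Assumed alert level; 0.2 is a widely used rule of thumb, not a standard.
DRIFT_ALERT_THRESHOLD = 0.2


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               bins: int = 10) -> float:
    """Compare two samples using equal-width bins over the baseline range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back if the baseline is constant

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped to the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]        # scores seen at validation
recent = [0.5 + i / 200 for i in range(100)]    # scores shifted upward

psi = population_stability_index(baseline, recent)
if psi > DRIFT_ALERT_THRESHOLD:
    print(f"Drift alert: PSI = {psi:.2f}")
```

A check like this could run as one of the testing checkpoints in a data pipeline, with the result surfaced to the AI Council when it crosses the agreed threshold.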

The result is a governance program that is pragmatic, not bureaucratic – a system that accelerates AI adoption by ensuring confidence and clarity at each step. It turns compliance from a burden into a competitive advantage, ensuring that innovation moves forward responsibly and sustainably.
